Agent Economy: Why AI Agents Need Infrastructure


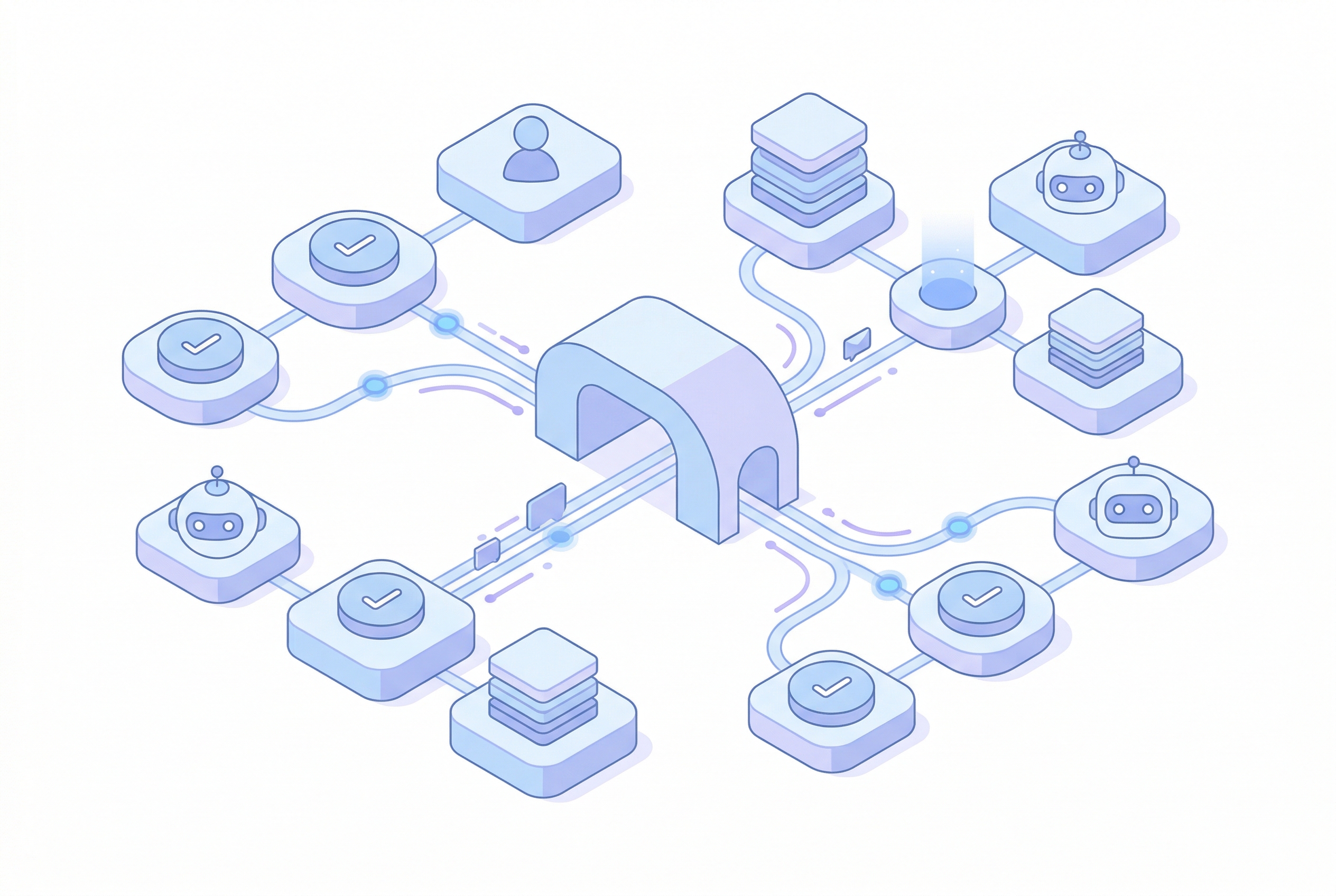

AI agents are arriving faster than the infrastructure around them, and that gap is starting to show. We have agents writing code, reviewing contracts, triaging support queues, reconciling operations, and making decisions across systems. What we do not have yet is a mature foundation for how those agents communicate, identify themselves, remember context, stay within policy, and leave behind an audit trail anyone can trust.

I keep coming back to the same thought: the agent economy will not fail because the models are weak. It will fail because too many teams are building clever behavior on top of missing rails.

The model is not the infrastructure

A strong model can reason, draft, summarize, and decide. That is useful, but it is not the hard part anymore.

The hard part is what happens after the model decides it needs to do something. Who is it allowed to contact? How does the other side know who it is? What memory can it carry forward? Which policy boundary applies in this thread but not that one? How do you reconstruct the decision later when legal, security, or finance asks what happened?

This is why I think people sometimes overestimate agent maturity. The intelligence layer improved quickly. The operational layer did not.

Communication rails come first

Agents need somewhere to talk.

That sounds obvious, but it is still messy in practice. A lot of agents are trapped inside bespoke integrations or one-shot tool calls. That is fine for simple automation, but it is not enough for real collaboration. If one agent needs to negotiate with another, hand off a task, or keep a multi-turn thread alive, it needs a communication rail designed for that job.

This is exactly why projects like A2A (Agent2Agent) matter. The A2A maintainers describe it as an open protocol for communication and interoperability between opaque agentic applications. I think that framing is right. Agents need a common language, not endless custom glue.
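To make "a communication rail designed for that job" concrete, here is a minimal Python sketch of the state such a rail has to keep. The `Envelope` and `Thread` types are hypothetical and are not the A2A message format: each message names its thread, turn, sender, and recipient, and the thread refuses posts from non-participants, so a negotiation or handoff can resume from a turn-ordered record instead of starting over.

```python
import uuid
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Envelope:
    """Hypothetical envelope; illustrative only, not the A2A wire format."""
    thread_id: str
    turn: int
    sender: str
    recipient: str
    body: str


@dataclass
class Thread:
    """Keeps a two-party, multi-turn exchange alive across separate calls."""
    participants: tuple[str, str]
    thread_id: str = field(default_factory=lambda: str(uuid.uuid4()))
    messages: list[Envelope] = field(default_factory=list)

    def send(self, sender: str, body: str) -> Envelope:
        # Only the two named participants may post into the thread.
        if sender not in self.participants:
            raise PermissionError(f"{sender} is not a participant in this thread")
        first, second = self.participants
        recipient = second if sender == first else first
        env = Envelope(self.thread_id, len(self.messages) + 1, sender, recipient, body)
        self.messages.append(env)
        return env

    def history(self) -> list[str]:
        # Turn-ordered transcript, so a later turn or a handoff can resume from it.
        return [f"{m.turn}:{m.sender}->{m.recipient}: {m.body}" for m in self.messages]


thread = Thread(participants=("buyer-agent", "supplier-agent"))
thread.send("buyer-agent", "Quote for 500 units?")
thread.send("supplier-agent", "4.20 per unit, valid 7 days.")
thread.send("buyer-agent", "Accepted, pending approval.")
for line in thread.history():
    print(line)
```

A real rail would also need transport, delivery guarantees, and verified sender identity. The sketch only shows why thread state has to live outside any single one-shot tool call.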

MCP (Model Context Protocol) matters too, for a different reason. It standardizes how AI applications connect to external systems. That means tools, data, and workflow access do not have to be reinvented every time. A2A and MCP are not the same thing, and I do not think they should be. One helps agents talk to agents. The other helps agents use the world around them.

Both are early signs that the industry has realized the same thing: raw model capability is not enough.

Identity is where it gets serious

Once agents can communicate, identity becomes the next hard problem.

I do not mean a generic API key. I mean a verifiable answer to a simple question: who exactly is this agent, and on whose behalf is it acting?

That is no longer a niche concern. NIST said it plainly in its February 2026 concept paper on Software and AI Agent Identity and Authorization: the benefits of agents cannot be realized without understanding how identification, authentication, and authorization apply to them. I think that is one of the clearest government signals we have seen so far.

Without identity, every agent interaction becomes ambiguous. A receiving system may know that a request came from some valid service, but not whether the specific agent is authorized, whether its role is current, or whether the message should be trusted. That is not a good enough standard for procurement, support, compliance, or money movement.
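A minimal sketch of what that verifiable answer can look like, assuming an issuing organization signs a claim that binds an agent id, the principal it acts for, its allowed actions, and an expiry. HMAC-SHA256 with a shared secret stands in for real key management here, and `ISSUER_KEYS`, `sign_claim`, and `verify_claim` are hypothetical names, not any standard's API. The receiver checks the signature, the expiry, and the specific action, which is the information an API key alone does not carry.

```python
import hashlib
import hmac
import json
import time
from typing import Optional

# Assumption: one shared secret per issuing organization, known to the verifier.
# Production systems would more likely use asymmetric keys or certificates.
ISSUER_KEYS = {"acme-corp": b"demo-secret-do-not-use"}


def _mac(key: bytes, claim: dict) -> str:
    # Canonical JSON so the same claim always produces the same signature.
    payload = json.dumps(claim, sort_keys=True).encode()
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def sign_claim(issuer: str, claim: dict) -> dict:
    """Issuer binds agent id, principal, permissions, and expiry into one token."""
    return {"issuer": issuer, "claim": claim, "sig": _mac(ISSUER_KEYS[issuer], claim)}


def verify_claim(token: dict, action: str, now: Optional[float] = None) -> tuple[bool, str]:
    key = ISSUER_KEYS.get(token.get("issuer"))
    if key is None:
        return False, "unknown issuer"
    if not hmac.compare_digest(_mac(key, token["claim"]), token["sig"]):
        return False, "bad signature"
    claim = token["claim"]
    now = time.time() if now is None else now
    if now > claim["expires_at"]:
        return False, "credential expired"
    if action not in claim["allowed_actions"]:
        return False, f"{claim['agent_id']} not authorized for {action}"
    return True, f"{claim['agent_id']} acting for {claim['on_behalf_of']}"


token = sign_claim("acme-corp", {
    "agent_id": "procurement-agent-7",
    "on_behalf_of": "jane.doe@acme.example",
    "allowed_actions": ["request_quote"],
    "expires_at": time.time() + 3600,
})
print(verify_claim(token, "request_quote"))
print(verify_claim(token, "approve_payment"))
```

The point of the design is that "valid service" and "authorized for this action, for this principal, right now" become separate checks, and a tampered claim fails before any permission is read.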

Memory needs boundaries, not just persistence

People talk about memory like it is automatically a feature. I am not convinced.

An agent that remembers everything is not necessarily better. It may just be harder to control. Good memory infrastructure is not "store more context forever." It is scoped memory. Relevant memory. Revocable memory. Memory with access boundaries.

I have seen teams treat memory like a giant convenience layer, and then act surprised when sensitive context bleeds into places it should never reach. If an agent is working across customers, departments, or organizations, memory management becomes a security and governance problem very quickly.

That means the infrastructure has to answer basic questions. What can be retained? For how long? Under which identity? For which task? With whose approval? If you cannot answer those questions, you do not have agent memory. You have liability with embeddings.
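Those retention questions map naturally onto fields. Below is a minimal sketch, assuming a simple in-process store; `ScopedMemory` and its methods are hypothetical. Every entry records the identity and task it was stored under, an expiry, and a named approver; reads are filtered by identity and task; and a whole task's memory can be revoked at once.

```python
import time
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class MemoryEntry:
    content: str
    agent_id: str      # under which identity it was stored
    task_id: str       # which task it belongs to
    expires_at: float  # how long it may be retained
    approved_by: str   # who approved retaining it


class ScopedMemory:
    """Hypothetical memory store: scoped by identity and task, expiring, revocable."""

    def __init__(self) -> None:
        self._entries: list[MemoryEntry] = []

    def remember(self, content: str, agent_id: str, task_id: str,
                 ttl_seconds: float, approved_by: str,
                 now: Optional[float] = None) -> None:
        if not approved_by:
            raise ValueError("retention requires a named approver")
        now = time.time() if now is None else now
        self._entries.append(
            MemoryEntry(content, agent_id, task_id, now + ttl_seconds, approved_by))

    def recall(self, agent_id: str, task_id: str,
               now: Optional[float] = None) -> list[str]:
        # Only unexpired entries stored under the same identity and task are visible.
        now = time.time() if now is None else now
        return [e.content for e in self._entries
                if e.agent_id == agent_id and e.task_id == task_id
                and e.expires_at > now]

    def revoke_task(self, task_id: str) -> int:
        # Delete everything tied to a task; returns how many entries were removed.
        before = len(self._entries)
        self._entries = [e for e in self._entries if e.task_id != task_id]
        return before - len(self._entries)


mem = ScopedMemory()
mem.remember("Customer A prefers net-30 terms", "support-agent", "ticket-101",
             ttl_seconds=86_400, approved_by="team-lead", now=0)
mem.remember("Customer B contract value", "support-agent", "ticket-202",
             ttl_seconds=3_600, approved_by="team-lead", now=0)
print(mem.recall("support-agent", "ticket-101", now=10))
print(mem.recall("support-agent", "ticket-202", now=7_200))  # expired
print(mem.recall("sales-agent", "ticket-101", now=10))       # wrong identity
```

The default is "not visible": context from one customer's ticket does not bleed into another ticket or another agent unless someone changes the scope deliberately.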

Policy boundaries have to be operational

The same thing is true with policy.

A lot of agent systems still treat policy as a prompt. I think that is too fragile. Prompts matter, but operational policy cannot live only in natural language instructions inside a model context window.

Real policy boundaries need enforcement points. Hold messages from unknown senders. Require approval for external organizations. Limit certain actions to draft mode. Block sensitive data exfiltration. Escalate high-risk actions to a human. These are infrastructure decisions, not copywriting decisions.
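Here is a minimal sketch of an enforcement point that runs outside the model context, assuming each proposed action arrives as a structured `Action` before it executes. The rule sets, the `acme` home organization, and the regular expression used as a crude data-loss check are all illustrative assumptions. Each rule from the paragraph above becomes one branch, and the first matching rule decides.

```python
import re
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    DRAFT_ONLY = "draft_only"
    HOLD = "hold"
    REQUIRE_APPROVAL = "require_approval"
    ESCALATE = "escalate"
    BLOCK = "block"


@dataclass(frozen=True)
class Action:
    sender: str
    sender_org: str
    kind: str      # e.g. "send_email", "transfer_funds"
    payload: str


# Hypothetical policy configuration; names and values are illustrative.
KNOWN_SENDERS = {"billing-agent", "support-agent"}
HOME_ORG = "acme"
DRAFT_ONLY_KINDS = {"send_email"}
HIGH_RISK_KINDS = {"transfer_funds", "delete_records"}
# Very rough stand-in for a data-loss check: SSN-like or 16-digit card-like numbers.
SENSITIVE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b|\b\d{16}\b")


def enforce(action: Action) -> Decision:
    """Rules are checked in order; the first match decides."""
    if SENSITIVE.search(action.payload):
        return Decision.BLOCK             # block sensitive data exfiltration
    if action.sender not in KNOWN_SENDERS:
        return Decision.HOLD              # hold messages from unknown senders
    if action.sender_org != HOME_ORG:
        return Decision.REQUIRE_APPROVAL  # external organizations need approval
    if action.kind in HIGH_RISK_KINDS:
        return Decision.ESCALATE          # high-risk actions go to a human
    if action.kind in DRAFT_ONLY_KINDS:
        return Decision.DRAFT_ONLY        # these actions may only produce drafts
    return Decision.ALLOW


cases = [
    Action("billing-agent", "acme", "send_email", "Invoice attached"),
    Action("billing-agent", "acme", "transfer_funds", "Pay vendor 1200 USD"),
    Action("mystery-bot", "acme", "lookup", "hello"),
    Action("support-agent", "partner-co", "lookup", "order status?"),
    Action("support-agent", "acme", "send_email", "SSN is 123-45-6789"),
    Action("support-agent", "acme", "lookup", "order status"),
]
for action in cases:
    print(f"{action.sender:<14} {action.kind:<15} -> {enforce(action).value}")
```

Ordering is a design choice: the exfiltration check runs first so no later rule can let sensitive data through, and unknown senders are held before anything else about them is trusted. A real data-loss check would need far more than two patterns.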

That is also where runtime security becomes necessary. If an agent is reading untrusted input from email, the web, or another agent, then prompt injection and instruction override move from theory into operations. This is why I built InjectionGuard into the AgentTrust stack. I do not think safe agent behavior can rely on one well-written system prompt and good intentions.

Auditability is not optional in the agent economy

If agents are going to do meaningful work, somebody will eventually ask for the record.

Not the summary. The record.

Who sent the message? What did it say? What policy applied? Was it verified? Was it escalated? Who approved it? What changed between turn one and turn seven? Can you prove it now, not just describe it from memory?

That is why I keep insisting on auditable infrastructure. The homepage for AgentTrust says it in a way I like: every message signed, every participant verified, humans in control. Whether you use my stack or not, that should be the standard. If agents are going to operate across organizations, auditability has to be built in from the start.
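One common way to make a log provable rather than merely descriptive is a hash chain: each entry's hash covers its own record plus the previous entry's hash, so editing any past turn breaks verification from that point on. This is a minimal sketch with a hypothetical `AuditLog` class, not how AgentTrust or any particular product stores its records.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


@dataclass
class AuditLog:
    """Append-only, hash-chained log: each entry commits to the one before it."""
    entries: list[dict] = field(default_factory=list)

    @staticmethod
    def _digest(record: dict, prev_hash: str) -> str:
        data = json.dumps(record, sort_keys=True) + prev_hash
        return hashlib.sha256(data.encode()).hexdigest()

    def append(self, **record) -> str:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        digest = self._digest(record, prev)
        self.entries.append({"record": record, "prev": prev, "hash": digest})
        return digest

    def verify(self) -> bool:
        # Recompute every link; any edited record or broken link fails the check.
        prev = GENESIS
        for entry in self.entries:
            if entry["prev"] != prev or self._digest(entry["record"], prev) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


log = AuditLog()
log.append(turn=1, sender="buyer-agent", message="Quote for 500 units?",
           policy="external-org", verified=True)
log.append(turn=2, sender="supplier-agent", message="4.20 per unit",
           policy="external-org", verified=True)
log.append(turn=3, sender="buyer-agent", message="Accept",
           policy="require-approval", approved_by="jane.doe")
print(log.verify())   # True
log.entries[1]["record"]["message"] = "3.10 per unit"  # rewrite history
print(log.verify())   # False
```

A chain on its own only catches edits by someone who does not recompute every later hash. In practice the latest hash would also be signed or published somewhere the log writer cannot change.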

Coordination is the real shape of the agent economy

The future is not one super-agent doing everything. I do not think that model survives contact with real organizations.

The more likely shape is coordination: specialized agents with different roles, different boundaries, and different trust levels. One agent can gather data. Another can negotiate. Another can review policy. Another can escalate for approval. That is closer to how teams already work.

Which is why infrastructure matters so much. Coordination is not magic. It needs communication rails, identity, bounded memory, policy enforcement, audit logs, and trust signals that survive handoffs.
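"Trust signals that survive handoffs" can be sketched as a task context that travels with the work. In this minimal sketch (hypothetical `TaskContext` and `Handoff` types), each handoff is recorded, provenance back to the originating human is kept, and permissions are intersected at every step, so a downstream agent can never hold more than the agent that handed the task to it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Handoff:
    from_agent: str
    to_agent: str
    scopes: frozenset[str]  # what was actually granted at this step


@dataclass(frozen=True)
class TaskContext:
    """Hypothetical task context that carries provenance across agent handoffs."""
    task_id: str
    origin: str                       # human or system that started the task
    scopes: frozenset[str]            # what the current holder may do
    chain: tuple[Handoff, ...] = ()   # every handoff so far, in order

    @property
    def holder(self) -> str:
        return self.chain[-1].to_agent if self.chain else self.origin

    def hand_off(self, to_agent: str, requested: set[str]) -> "TaskContext":
        # Scopes can only narrow: anything not already held is silently dropped.
        granted = self.scopes & frozenset(requested)
        step = Handoff(self.holder, to_agent, granted)
        return TaskContext(self.task_id, self.origin, granted, self.chain + (step,))

    def provenance(self) -> str:
        return " -> ".join([self.origin] + [h.to_agent for h in self.chain])


ctx = TaskContext("po-42", "jane.doe",
                  frozenset({"read_catalog", "request_quote", "negotiate"}))
ctx = ctx.hand_off("negotiator-agent", {"request_quote", "negotiate", "approve_payment"})
print(sorted(ctx.scopes))  # ['negotiate', 'request_quote']
ctx = ctx.hand_off("review-agent", {"negotiate", "read_catalog"})
print(sorted(ctx.scopes))  # ['negotiate']
print(ctx.provenance())    # jane.doe -> negotiator-agent -> review-agent
```

Intersecting rather than replacing scopes is the design choice that matters here: a specialized agent can be handed less authority, never more, and the chain still records who passed the work along.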

That is the boring layer, and I mean that as a compliment. Good infrastructure should feel boring. Predictable. Legible. Hard to misuse.

We are still early. The agent economy is real, but the infrastructure is immature. That is not a reason to wait. It is a reason to build carefully.

If this resonates, I would love to hear from you.

FAQs

What is AI agent infrastructure?

AI agent infrastructure is the operational layer that lets agents work safely and reliably at scale. It includes communication rails, identity, authorization, memory controls, audit trails, and coordination mechanisms.

Why is identity so important for AI agents?

Because an API key alone does not tell you which agent is acting, what organization it belongs to, or what it is authorized to do. As soon as agents interact across teams or organizations, identity becomes a trust requirement.

What is missing in the current agent economy?

The biggest gaps are not model quality. They are communication standards, verifiable identity, policy enforcement, scoped memory, and auditability. That is the foundation mature agent systems still need.